PostgreSQL is a powerful, open source object-relational database system used for OLTP workloads. Mirror supports PostgreSQL as a sink, allowing you to write data directly into PostgreSQL. This provides a robust and flexible solution for both mid-sized analytical workloads and high performance REST and GraphQL APIs. When you create a new pipeline, a table will be automatically created with columns from the source dataset. If a table is already created, the pipeline will write to it. As an example, you can set up partitions before you setup the pipeline, allowing you to scale PostgreSQL even further. The PostgreSQL also supports Timescale hypertables, if the hypertable is already setup. We have a separate Timescale sink in technical preview that will automatically setup hypertables for you - contact support@goldsky.com for access. Full configuration details for PostgreSQL sink is available in the reference page.Documentation Index

Fetch the complete documentation index at: https://docs.goldsky.com/llms.txt

Use this file to discover all available pages before exploring further.

Hosted PostgreSQL

Mirror now supports hosted PostgreSQL as a sink, allowing you to write data directly into a PostgreSQL database hosted by Goldsky via NeonDB. This database will scale as you grow and you’ll always be able to request a data export when and if you need it. This simplifies development and allows you to not worry about database administration. We securely store, host and scale the database for you. After you create a hosted Postgres database, we will display connection information so that you can access your data and run queries on it. You can always reference the sink section of the web dashboard to view connection info for your hosted databases. Please see the pricing page for hosted Postgres pricing.Bring Your Own PostgreSQL

If you’re bringing your own PostgreSQL database, you’ll need to set up the appropriate roles and secrets as described below.Role Creation

Here is an example snippet to give the permissions needed for pipelines.Secret Creation

Create a PostgreSQL secret with the following CLI command:Examples

Getting an edge-only stream of raw logs

This definition gets real-time edge stream of raw logs straight into a postgres table namedeth_logs in the goldsky schema, with the secret A_POSTGRESQL_SECRET created above.

Example PostgreSQL pipeline

Tips for backfilling large datasets into PostgreSQL

While PostgreSQL offers fast access of data, writing large backfills into PostgreSQL can sometimes be hard to scale. Often, pipelines are bottlenecked against sinks. Here are some things to try:Avoid indexes on tables until after the backfill

Indexes increase the amount of writes needed for each insert. When doing many writes, inserts can slow down the process significantly if we’re hitting resources limitations.Bigger batch_sizes for the inserts

Thesink_buffer_max_rows setting controls how many rows are batched into a single insert statement. Depending on the size of the events, you can increase this to help with write performance. 1000 is a good number to start with. The pipeline will collect data until the batch is full, or until the sink_buffer_interval is met.

Temporarily scale up the database

Take a look at your database stats like CPU and Memory to see where the bottlenecks are. Often, big writes aren’t blocked on CPU or RAM, but rather on network or disk I/O. For Google Cloud SQL, there are I/O burst limits that you can surpass by increasing the amount of CPU. For AWS RDS instances (including Aurora), the network burst limits are documented for each instance. A rule of thumb is to look at theEBS baseline I/O performance as burst credits are easily used up in a backfill scenario.

Provider Specific Notes

AWS Aurora Postgres

When using Aurora, for large datasets, make sure to useAurora I/O optimized, which charges for more storage, but gives you immense savings on I/O credits. If you’re streaming the entire chain into your database, or have a very active subgraph, these savings can be considerable, and the disk performance is significantly more stable and results in more stable CPU usage pattern.

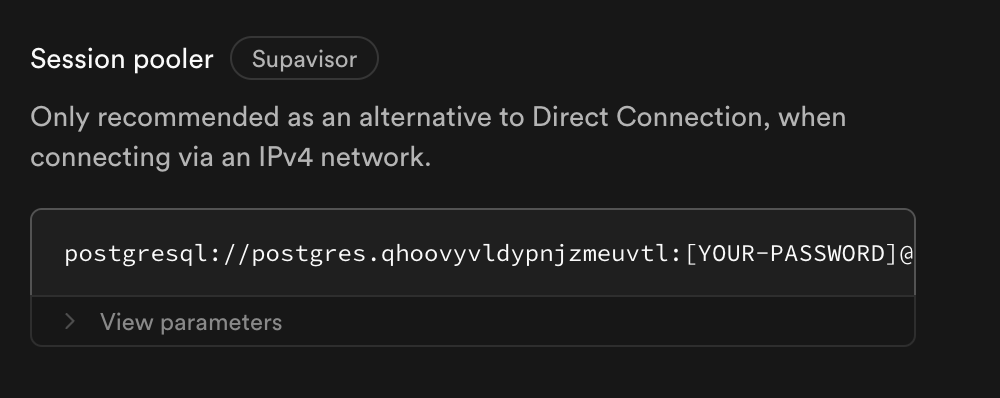

Supabase

Supabase’s direct connection URLs only support IPv6 connections and will not work with our default validation. There are two solutions-

Use

Session Pooling. In the connection screen, scroll down to see the connection string for the session pooler. This will be included in all Supabase plans and will work for most people. However, sessions will expire, and may lead to some warning logs in your pipeline logs. These will be dealt with gracefully and no action is needed. No data will be lost due to a session disconnection.

- Alternatively, buy the IPv4 add-on, if session pooling doesn’t fit your needs. It can lead to more persistent direct connections,