What you’ll need

- A Goldky account and the CLI installed

Install Goldsky's CLI and log in

-

Install the Goldsky CLI:

For macOS/Linux:

For Windows:Windows users need to have Node.js and npm installed first. Download from nodejs.org if not already installed.

- Go to your Project Settings page and create an API key.

-

Back in your Goldsky CLI, log into your Project by running the command

goldsky loginand paste your API key. -

Now that you are logged in, run

goldskyto get started:

-

Install the Goldsky CLI:

For macOS/Linux:

- A basic understanding of the Mirror product

- A destination sink to write your data to. In this example, we will use the PostgreSQL Sink

Introduction

In order to stream all the ERC-20 Transfers of a chain there are two potential methods available:- Use the readily available ERC-20 dataset for the chain you are interested in: this is the easiest and quickest method to get you streaming token transfers into your sink of choice with minimum code.

- Build the ERC-20 Transfers pipeline from scratch using raw or decoded logs: this method takes more code and time to implement but it’s a great way to learn about how you can use decoding functions in case you want to build more customized pipelines.

Using the ERC-20 Transfers Source Dataset

Every EVM chain has its own ERC-20 dataset available for you to use as source in your pipelines. You can check this by running thegoldsky dataset list command and finding the EVM of your choice.

For this example, let’s use apex chain and create a simple pipeline definition using its ERC-20 dataset that writes the data into a PostgreSQL instance:

apex-erc20-transfers.yaml

If you copy and use this configuration file, make sure to update: 1. Your

secretName. If you already created a secret, you

can find it via the CLI command goldsky secret list. 2. The schema and table you want the data written to, by default it

writes to public.apex_erc20_transfers.Building ERC-20 Transfers from scratch using logs

In the previous method we just explored, the ERC-20 datasets that we used as source to the pipeline encapsulates all the decoding logic that’s explained in this section. Read on if you are interested in learning how it’s implemented in case you want to consider extending or modifying this logic yourself. To build the token transfers pipeline from scratch, use theraw_logs Direct Indexing dataset for that chain in combination with Decoding Transform Functions using the ABI of a specific ERC-20 Contract.

Building ERC-20 Tranfers using Decoding Transform Functions

In this example, we will stream all theTransfer events of all the ERC-20 Tokens for the Scroll chain. To that end, we will dinamically fetch the ABI of the USDT token from the Scrollscan API (available here)

and use it to identify all the same events for the tokens in the chain. We have decided to use the ABI of USDT token contract for this example but any other ERC-20 compliant token would also work.

We need to differentiate ERC-20 token transfers from ERC-721 (NFT) transfers since they have the same event signature in decoded data: Transfer(address,address,uint256).

However, if we look closely at their event definitions we can appreciate that the number of topics differ:

- ERC-20:

event Transfer(address indexed _from, address indexed _to, uint256 _value) - ERC-721:

event Transfer(address indexed _from, address indexed _to, uint256 indexed _tokenId)

Pipeline Definition

scroll-erc20-transfers.yaml

If you copy and use this configuration file, make sure to update: 1. Your

secretName. If you already created a secret, you

can find it via the CLI command goldsky secret list. 2. The schema and table you want the data written to, by default it

writes to mirror.erc20_transfers.Transform: scroll_decoded

_gs_fetch_abi function to get the ABI from Scrollscan and pass it as first argument

to the function _gs_log_decode to decode its topics and data. We store the result in a decoded ROW which we unnest on the next transform.

We also limit the decoding to the relevent events using the topic filter and SPLIT_INDEX to only include ERC-20 transfers.

topics LIKE '0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef%':topicsis a comma separated string. Each value in the string is a hash. The first is the hash of the full event_signature (including arguments), in our caseTransfer(address,address,uint256)for ERC-20, which is hashed to0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef. We useLIKEto only consider the first signature, with a%at the end, which acts as a wildcard.SPLIT_INDEX(topics, ',', 3) IS NULL: as mentioned in the introduction, ERC-20 transfers share the sameevent_signatureas ERC-721 transfers. The difference between them is the number of topics associated with the event. ERC-721 transfers have four topics, and ERC-20 transfers have three.

Transform: scroll_clean

event_params and event_signature from the result of the decoding. We then filter the query on:

decoded IS NOT NULL: to leave out potential null results from the decoderdecoded.event_signature = 'Transfer': the decoder will output the event name as event_signature, excluding its arguments. We use it to filter only for Transfer events.

Transform: scroll_20_transfers

id, address (if you are syncing multiple contract addresses), sender, recipient, token_id, and value.

id: This is the Goldsky providedid, it is a string composed of the dataset name, block hash, and log index, which is unique per event, here’s an example:log_0x60eaf5a2ab37c73cf1f3bbd32fc17f2709953192b530d75aadc521111f476d6c_18address AS token_id: We use thelowerfunction here to lower-case the address to make using this data simpler downstream, we also rename the column totoken_idto make it more explicit.lower(event_params[1]) AS sender: Here we continue to lower-case values for consistency. In this case we’re using the first element of theevent_paramsarray (using a 1-based index), and renaming it tosender. Each event parameter maps to an argument to theevent_signature.lower(event_params[2]) AS recipient: Like the previous column, we’re pulling the second element in theevent_paramsarray and renaming it torecipient.lower(event_params[3]) AS value: We’re pulling the third element in theevent_paramsarray and renaming it tovalueto represent the amount of the token_id sent in the transfer.

WHERE filter to this query with address you are interested in, like: WHERE address IN ('0xBC4CA0EdA7647A8aB7C2061c2E118A18a936f13D', '0xdac17f958d2ee523a2206206994597c13d831ec7')

Deploying the pipeline

Our last step is to deploy this pipeline and start sinking ERC-20 transfer data into our database. Assuming we are using the same file name for the pipeline configuration as in this example, we can use the CLI pipeline create command like this:goldsky pipeline create scroll-erc20-transfers --definition-path scroll-erc20-transfers.yaml

After some time, you should see the pipeline start streaming Transfer data into your sink.

Remember that you can always speed up the streaming process by

updating the resourceSize of the pipeline

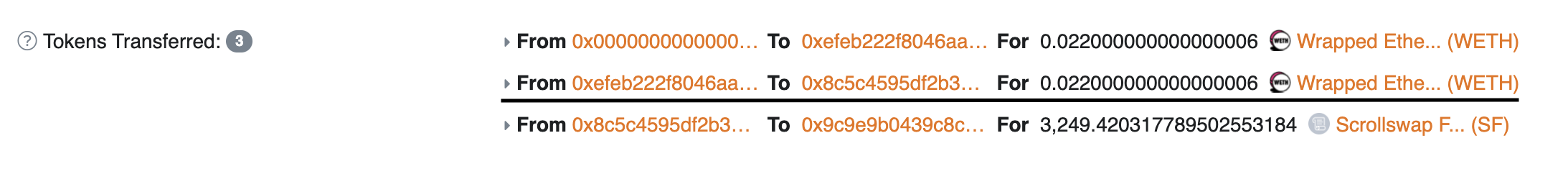

| id | token_id | sender | recipient | value | event_name | block_number | block_hash | log_index | transaction_hash | transaction_index |

|---|---|---|---|---|---|---|---|---|---|---|

| log_0x666622ad5c04eb5a335364d9268e24c64d67d005949570061d6c150271b0da12_2 | 0x5300000000000000000000000000000000000004 | 0xefeb222f8046aaa032c56290416c3192111c0085 | 0x8c5c4595df2b398a16aa39105b07518466db1e5e | 22000000000000006 | Transfer | 5136 | 0x666622ad5c04eb5a335364d9268e24c64d67d005949570061d6c150271b0da12 | 2 | 0x63097d8bd16e34caacfa812d7b608c29eb9dd261f1b334aa4cfc31a2dab2f271 | 0 |